Machines are increasingly used in the newspaper industry, directly affecting the work of reporters and editors. Let’s discuss this with Shaping Futures and assess how it will affect our future.

About a third of the content published by Bloomberg News uses some form of automated technology. The company’s Cyborg system helps reporters produce thousands of articles on company earnings reports each quarter.

Tireless, accurate, and uncomplaining, Cyborg has helped Bloomberg in its race with Reuters, its direct competitor in business and financial journalism.

Apart from news about business results, Cyborg has also helped Bloomberg produce news about sports tournaments, earthquakes …

More and more newspapers are using artificial intelligence in their work. Last week, the Australian edition of The Guardian published its first machine-assisted article, and Forbes recently announced that it is testing a tool called Bertie that provides reporters with rough drafts of articles.

Artificial intelligence is becoming part of the journalism industry, but it is not a threat to reporters. Instead, it allows journalists to spend more time on substantive work.

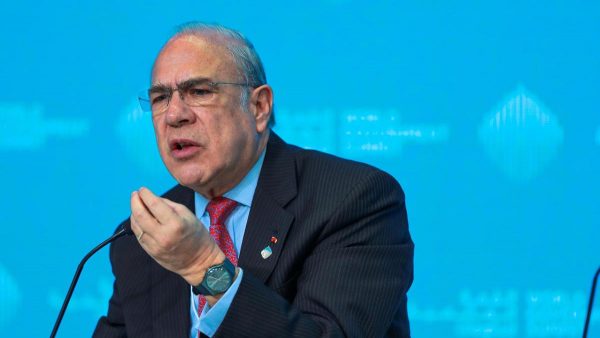

“The work of journalism is creative, it’s about curiosity, it’s about storytelling, it’s about digging and holding governments accountable, it’s critical thinking, it’s judgment — and that is where we want our journalists spending their energy,” said Lisa Gibbs, the director of news partnerships for The A.P.

The A.P., The Post and Bloomberg have also set up internal alerts to flag anomalous bits of data. Reporters who see these alerts can then decide whether there is a bigger story to write.

For example, during the Olympics, The Post set up alerts on Slack, the workplace messaging system, to notify editors if a result was 10% above or below the world record.
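The alert rule described above can be sketched in a few lines of Python. This is only an illustration, not The Post's actual system: the function names, the reading of the rule as "within 10% of the record in either direction", and the message wording are all assumptions. The payload uses the simple `{"text": ...}` JSON shape that Slack incoming webhooks accept, and the step of actually sending it is left out.

```python
import json
from typing import Optional

# Assumed threshold: alert when a result is within 10% of the record.
THRESHOLD = 0.10


def within_record_band(result: float, world_record: float,
                       threshold: float = THRESHOLD) -> bool:
    """Return True if the result differs from the record by at most
    `threshold`, measured relative to the record."""
    if world_record <= 0:
        raise ValueError("world_record must be positive")
    return abs(result - world_record) / world_record <= threshold


def build_alert(event: str, result: float, world_record: float) -> Optional[str]:
    """Return a JSON message for editors, or None if no alert is needed.

    The message format is hypothetical.
    """
    if not within_record_band(result, world_record):
        return None
    pct = (result - world_record) / world_record * 100
    text = (f"{event}: result {result} is {pct:+.1f}% "
            f"relative to the world record {world_record}")
    return json.dumps({"text": text})
```

A caller would pass each incoming result through `build_alert` and forward any non-`None` message to the editors' channel, leaving the decision about whether there is a story to a human.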

But machine-generated stories are not infallible. For an earnings report article, for instance, software systems may meet their match in companies that cleverly choose figures in an effort to garner a more favorable portrayal than the numbers warrant. At Bloomberg, reporters and editors try to prepare Cyborg so that it will not be spun by such tactics.

AI is also becoming a productivity tool for reading reports and finding leads. In data analysis, it can help detect anomalies.

A few years ago, AI was used only by high-tech companies, but it has now become a necessity, said Francesco Marconi, the head of research and development at The Journal. “I think a lot of the tools in journalism will soon be powered by artificial intelligence.”

Mr. Marconi likened the addition of A.I. in newsrooms to the introduction of the telephone. “It gives you more access, and you get more information quicker,” he said. “It’s a new field, but technology changes. Today it’s A.I., tomorrow it’s blockchain, and in 10 years it will be something else. What does not change is the journalistic standard.”

According to Marc Zionts, the chief executive of Automated Insights, machines are a long way from replacing flesh-and-blood reporters and editors.

“If you are a non-learning, non-adaptive person — I don’t care what business you’re in — you will have a challenging career,” Mr. Zionts said.

In addition to giving reporters more time to pursue their interests, machine journalism comes with an added benefit for editors.

“One thing I’ve noticed,” said Mr. St. John, “is that our A.I.-written articles have zero typos.”

To help secure AI’s future, the Michael Dukakis Institute has launched the AIWS Initiative, including the AIWS 7-Layer Model for ethical AI and concepts for the design of AI-Government, which has received the support of Paul Nemitz.