Are We Letting AI Make Life-or-Death Judgments?

At the Cybernetic AI Self-Driving Car Institute, AI software for self-driving cars is being developed. One crucial aspect of that AI is its need to make “judgments” in specific driving situations, some of which might lead to life-or-death outcomes.

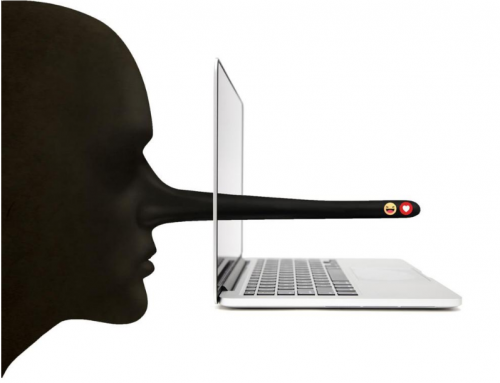

While driving, we can encounter unexpected situations shaped by many factors: traffic, signs, pedestrians, and the terrain. Many actions are possible, each leading to different outcomes, and we have only seconds to decide with people’s lives in the balance. In an analysis by Dr. Lance Eliot, CEO, Techbrium Inc. and the AI Trends Insider, we are driving on the highway with cars behind us and in the other lanes when a shadowy figure of a pedestrian suddenly appears. In this case, whatever action we take, casualties and damage are inevitable, since we might hit the pedestrian or other cars. An AI driver could face the same situation a human driver does. Some argue that self-driving cars will ensure nothing like this ever happens, but given the limitations of sensors and the immaturity of the technology, that cannot be guaranteed.

Should we leave the decision-making to AI?

If we do, we need to design tests of the AI’s ethical decision-making or judgment to avoid potential destruction. Because automated software lacks a human sense of reasoning, it will not be able to take appropriate action on its own when such a situation arises. We also face another ethical challenge when it comes to prioritizing factors: how can we tell which course of action is best when such a moment occurs?

To avoid similar cases in the future, we should not leave this to the technology developers; an Ethics Review Board should be used. Ethics Review Boards might be established at the federal level, the state level, or both. They would be tasked with guiding what the AI should do when it encounters such moments (by providing policies and procedures, rather than somehow “coding” such aspects). They might also be involved in assessing incidents in which AI self-driving cars go beyond the scope of their learned knowledge.

Because technology is developing at unprecedented speed, not many people pay attention to the ethics. The automation of cars will also require careful consideration and sampling, monitoring regulations, and an ethical framework for AI, which is something the Michael Dukakis Institute’s experts, in partnership with AI Trends, are working on.