Boston, December 9, 2024 – As part of the AI Action Summit, which will take place in Paris on February 10–11, 2025, France has launched the “AI Convergence” Challenges to showcase the dynamism of academic and industrial ecosystems and the global foundation of their innovations.

The goal of these challenges is to promote projects that tackle ambitious technological problems or societal issues, demonstrating the value of AI for humanity as a whole. This initiative also represents a significant opportunity to unite ecosystems, foster a shared vision, and generate stimulating ideas and solutions around this transformative technology. AI Convergence is already underway, showcasing its potential as both an accelerator and a differentiator.

Researchers, engineers, entrepreneurs, and innovators from all sectors: if you have an ambitious project that addresses unresolved issues using AI, respond to this call by submitting your challenge! Selected projects will receive significant visibility during the summit period.

Five Themes to Address AI Challenges

The challenges aim to foster innovative solutions in the following priority areas of the Summit:

- Public Interest AI
- Future of Work
- Innovation and Culture
- Trust in AI
- Global AI Governance

The AI Action Summit, hosted by France on February 10–11, 2025, will spotlight concrete actions ensuring that AI development and deployment benefit society, the economy, and the environment while upholding the common good. The summit will bring together Heads of State and Government, leaders of international organizations, CEOs of small and large companies, representatives of academia, non-governmental organizations, artists, and members of civil society.

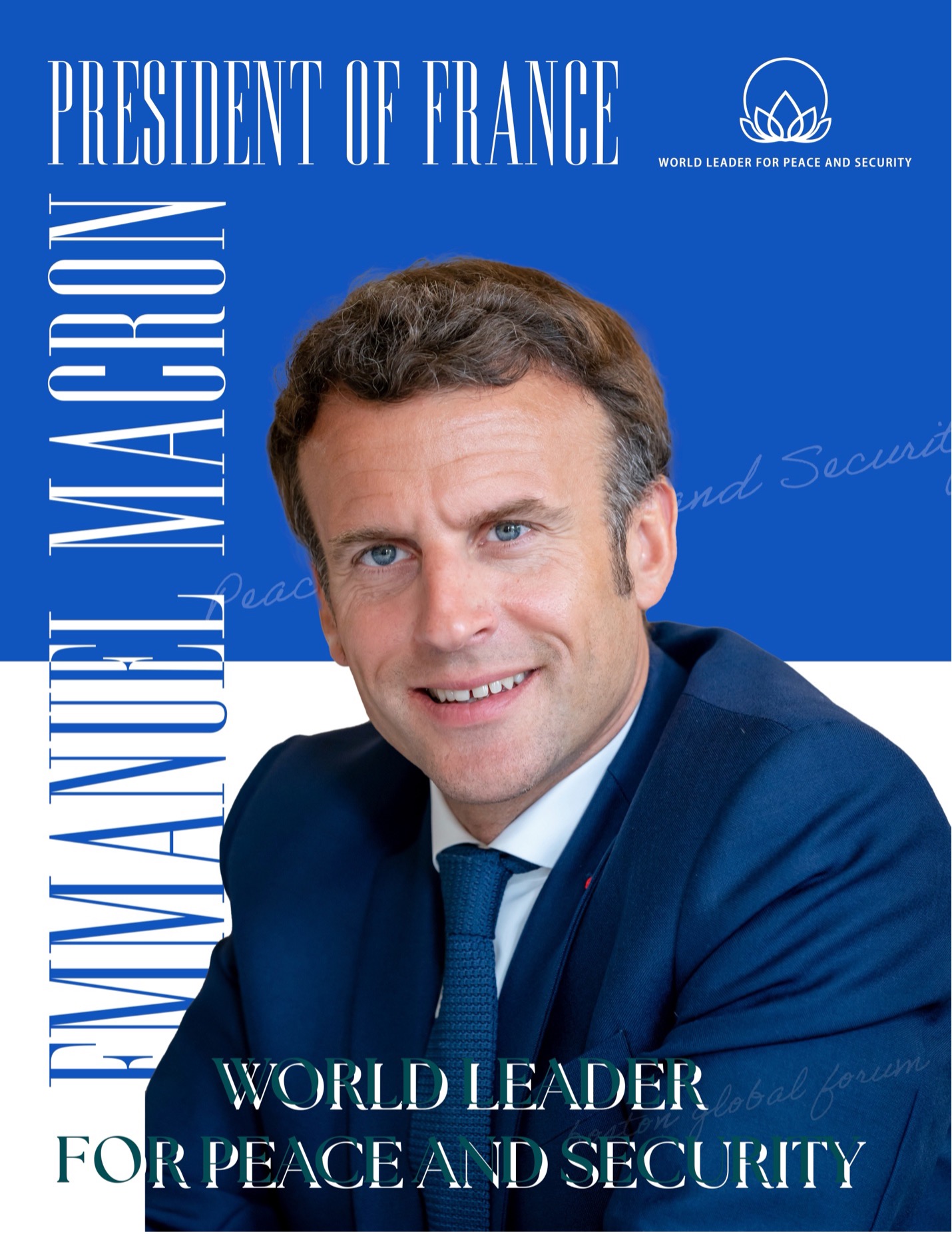

In his acceptance speech for the 2024 World Leader for Peace and Security Award, given at the ceremony organized by the Boston Global Forum on November 25, 2024, at Harvard University’s Loeb House, President Macron shared his vision for global collaboration and technological advancement:

“I invite you all to Paris for our Summit for Action on AI in February: economic actors, decision-makers, and thinkers—we must create global consensus and make decisions at the right pace and on the right scale.

In this regard, your quest for Enlightenment on a global scale can only resonate in France, in this pursuit of progress against all forms of obscurantism.

I believe in our collective capacity to put technology at the service of protecting our democracies and to forge a new era of prosperity, freedom, and stability in the world. I believe, like you, that we cannot and must not renounce our humanism and our Enlightenment ideals for the coming century.

Thank you all for your unwavering commitment.

Thank you for this award, which honors France and compels us to act collectively starting this February in Paris.”

In alignment with President Macron’s vision, the Boston Global Forum will contribute to the upcoming AI Action Summit with its pioneering initiative, “AIWS Government 24/7.” This initiative aims to support around-the-clock governance through advanced artificial intelligence, enhancing decision-making and operational efficiency for global leaders.